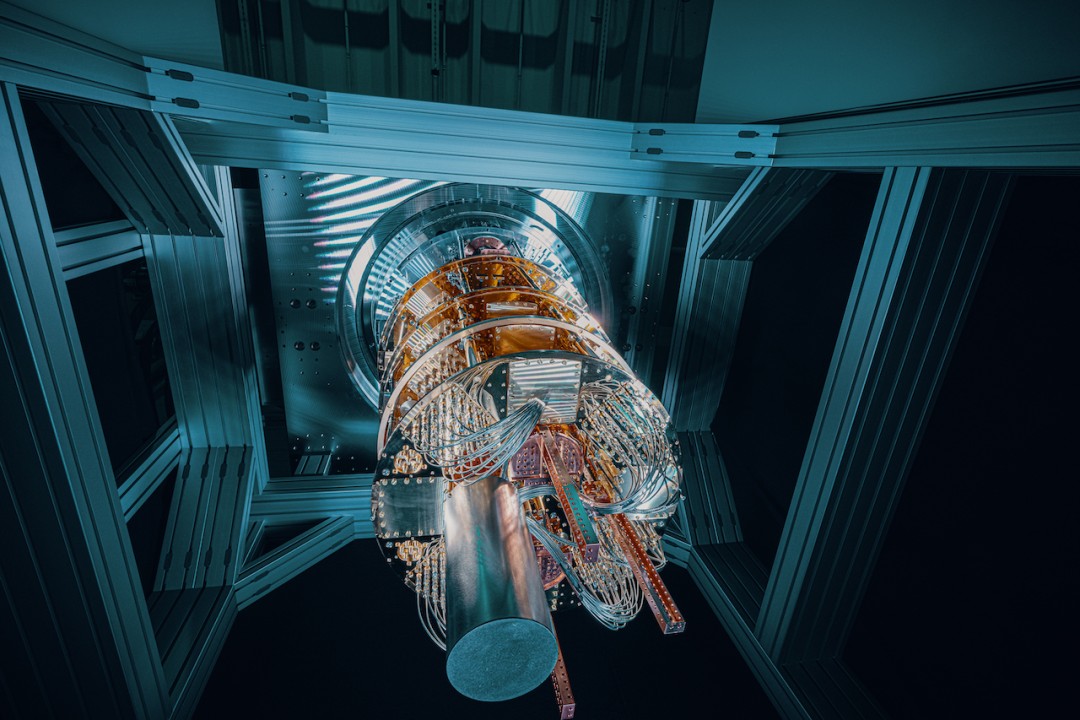

The world is entering a phase in which intelligence and computation are being redefined. Artificial intelligence is scaling from pattern recognition to decision support at planetary scale. Quantum technologies, meanwhile, are moving from lab curiosity toward near-term utility in chemistry, logistics, and sensing. Their most critical impact is in cryptography, where a sufficiently large quantum computer could break today's widely used public-key schemes. In this period of acceleration, societies need more than momentum; they need meaning, methods, and measurable safeguards. That is the gap the AI & Quantum Futures Alliance (AIQFA) is designed to close: stewarding a future in which capability and conscience rise together.

From principles to practice. Ethics statements are plentiful; operational ethics is rare. AIQFA starts with a pledge anchored in four working principles (human agency, accountability, security by design, and social benefit). It then translates them into practical instruments: impact assessments, evaluation protocols, and governance templates that organizations can actually implement. Ethics ceases to be a poster on the wall and becomes a workflow in the lab, a criterion in procurement, and a KPI in deployment.

Why “AI and quantum” together? Because the technologies will co-evolve. Quantum algorithms may alter optimization, simulation, and cryptography; AI will accelerate hypothesis generation, experiment design, and control for quantum systems. Governance that treats them separately invites contradictory rules, duplicated effort, and missed synergies. An integrated approach allows shared risk taxonomies (e.g., safety, reliability, misuse), common evaluation infrastructure (testbeds, red-teaming), and joint policy dialogues that anticipate convergence rather than react to it.

Capability without concentration. One of the starkest risks is hyper-concentration of compute, data, and talent. Deliberate interventions are needed, such as shared research clouds, transparent model cards, open benchmarks, and portable skills. Without them, public-interest outcomes will be outgunned by closed, capital-intensive initiatives. AIQFA’s compute equity programs, data-trust frameworks, and open evaluation suites aim to broaden participation while safeguarding security and IP. The result: more actors building responsibly, fewer single points of failure.

From risk to resilience. Ethical deployment is as much about building resilience as it is about reducing harm. For AI systems, resilience means robustness across domains, resistance to adversarial manipulation, and graceful degradation under stress. Against quantum threats, resilience means post-quantum cryptography (PQC) migration, supply-chain assurance, and talent continuity. AIQFA’s playbooks codify how to inventory exposures, prioritize interventions, sequence upgrades, and validate outcomes, so leaders can move from hand-wringing to hardening.
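One widely cited heuristic for the "prioritize" step of a PQC inventory is Mosca's inequality. Let x be how long data must stay confidential, y how long migration takes, and z the time until a cryptographically relevant quantum computer (CRQC) exists. If x + y > z, the data is already at risk, for example from harvest-now, decrypt-later collection. The sketch applies that heuristic. It is our illustrative choice, not a description of AIQFA's playbooks. The `CryptoAsset` type, the function names, the asset names, and every number are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CryptoAsset:
    """One entry in a hypothetical cryptographic inventory."""
    name: str
    shelf_life_years: float   # x: how long protected data must stay confidential
    migration_years: float    # y: estimated time to move this system to PQC


def quantum_exposure(asset: CryptoAsset, years_to_crqc: float) -> float:
    """Mosca-style exposure: (x + y) - z.

    A positive value means data encrypted before migration finishes must stay
    secret past the estimated arrival of a CRQC (z = years_to_crqc).
    """
    return asset.shelf_life_years + asset.migration_years - years_to_crqc


def prioritize(assets: list[CryptoAsset], years_to_crqc: float) -> list[tuple[str, float]]:
    """Return (name, exposure) for at-risk assets only, most exposed first."""
    scored = [(a.name, quantum_exposure(a, years_to_crqc)) for a in assets]
    at_risk = [s for s in scored if s[1] > 0]
    return sorted(at_risk, key=lambda s: s[1], reverse=True)


if __name__ == "__main__":
    # Illustrative inventory; every name and number here is hypothetical.
    inventory = [
        CryptoAsset("health-records-archive", shelf_life_years=25, migration_years=4),
        CryptoAsset("payments-api-tls", shelf_life_years=1, migration_years=2),
        CryptoAsset("hr-payroll-backups", shelf_life_years=10, migration_years=5),
    ]
    for name, exposure in prioritize(inventory, years_to_crqc=12):
        print(f"{name}: exposed by {exposure:.0f} years")
```

Because z is deeply uncertain, a cautious team might run the same inventory under several CRQC timelines. It could then sequence upgrades by how often each asset appears as at risk.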

Measuring what matters. Governance fails when it can’t be measured. AIQFA promotes outcome-level metrics: how well a medical triage model performs across demographic subgroups; whether a resource allocation system preserves due process; how a quantum-enhanced optimizer affects energy intensity or emissions. We favor audits that check real-world utility, not just benchmark scores, and dashboards that track results over time rather than in one-off snapshots. Measurement is built into the lifecycle, not bolted on at the end.
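As a concrete instance of the first metric, the next sketch disaggregates a triage model's sensitivity by demographic subgroup. Sensitivity here is the share of truly urgent cases the model flags. The sketch then reports the largest gap between subgroups. It rests on several assumptions: binary urgent/not-urgent labels, sensitivity as the metric of interest, and the hypothetical helpers `sensitivity_by_group` and `max_gap`. The toy data are invented and are not results from any real model. A real audit would add confidence intervals and clinically chosen metrics.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """True-positive rate (sensitivity) per subgroup.

    y_true / y_pred: 1 = urgent, 0 = not urgent; groups: subgroup label per case.
    Groups with no urgent cases are omitted, since sensitivity is undefined there.
    """
    positives = defaultdict(int)   # truly urgent cases seen per group
    caught = defaultdict(int)      # urgent cases the model flagged per group
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            positives[g] += 1
            caught[g] += int(p == 1)
    return {g: caught[g] / positives[g] for g in positives}


def max_gap(rates):
    """Difference between the best- and worst-served subgroups."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Toy data for illustration only; not results from any real model.
    y_true = [1, 1, 1, 1, 0, 1, 1, 1, 0, 1]
    y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 1, 1]
    groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]
    rates = sensitivity_by_group(y_true, y_pred, groups)
    print(rates)                          # {'A': 0.75, 'B': 0.5}
    print(f"max gap: {max_gap(rates):.2f}")  # max gap: 0.25
```

Recording `max_gap` for every model release, rather than once, turns a snapshot audit into a time series that can feed a longitudinal dashboard.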

Educating for judgment, not just skills. Technical competence is necessary but insufficient. AIQFA’s education programs embed normative reasoning about power, rights, and fairness into curricula and professional development. Fellows learn to run a fairness assessment and to explain to a city council why certain trade-offs were chosen. Engineers practice incident-response tabletop exercises. Policy students build literacy in model architectures and cryptographic primitives. Leaders learn to ask sharper questions and recognize weak signals early.

Pluralism by design. No single country, company, or campus has all the answers. AIQFA’s governance model is intentionally plural: multi-stakeholder councils, regional nodes, open RFPs for research, and inclusive representation from the Global South. This structure guards against groupthink and surfaces context-specific insights. It also provides an off-ramp from zero-sum geopolitics by focusing on shared problems: climate resilience, pandemic detection, critical infrastructure safety, and trustworthy identity.

A portfolio of safeguards. There is no silver bullet. That’s why AIQFA advocates layered safeguards: pre-deployment risk assessments, evaluation suites with robustness and bias probes, incident reporting channels, model usage policies, and clear red lines (e.g., bans on indiscriminate mass surveillance or unconsented biometric inference). In quantum, we push for crypto-agility, hybrid cryptography during migration, and standardized testing for hardware reliability. The portfolio approach ensures that if one layer fails, others stand guard.

From pilots to public benefit. AIQFA operates as a bridge: converting promising lab results into pilots with public agencies and industry partners, then refining them through rigorous evaluation. We publish what works and, critically, what doesn’t, so the field learns. Our aim is to raise the floor of practice, not just celebrate the ceiling of possibility. That is the ethics-first blueprint: capability that is accountable; innovation that is inclusive; progress that is provable.

If the last era optimized for moving fast, the next must optimize for moving wisely. AIQFA’s role is to make “wise” concrete: policies that scale, safeguards that stick, and ecosystems where human dignity stays at the center of technological power.